Context

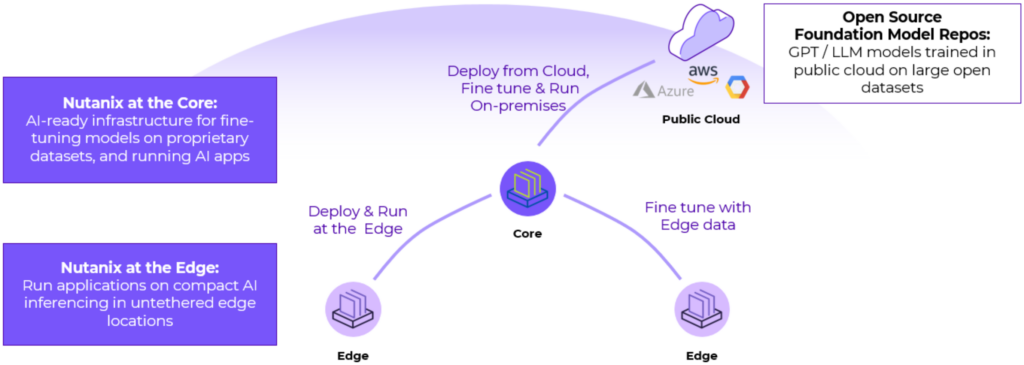

Hyperscalers have long been positioned as the default, “you can’t go wrong” deployment zone for AI/ML workloads. For many use cases, however, this is not true; see “Why AI and machine learning are drifting away from the cloud.”

Real-world examples from many ISVs and customers tell a different story. Several drivers, namely low latency, data privacy and cost (especially at scale), make the Core DC (owned or hosted) a better choice. And with leading market analysts agreeing on explosive data growth at storefront, branch and customer service center locations, on-prem deployments are a ‘no-brainer’ for Edge AI/ML use cases.

Nutanix advantages for Edge AI/ML

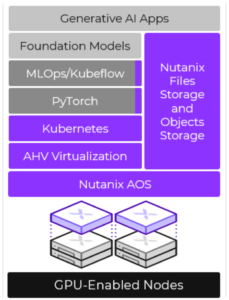

Nutanix delivers right-sized solutions for Edge AI/ML use cases for verticals such as Retail, Healthcare, BFSI and Utilities. Built around the Nutanix GPT-in-a-Box™ design, the advantages of Nutanix over hyperscaler edge offerings include:

- Data security & cost: Single-socket entry-level models, together with the Nutanix-qualified open-source stack, run inferencing at the edge, with model training/learning in the public cloud.

- Edge-friendly form factors: Compact, wall-mountable, ruggedized, GPU-capable (e.g. Nvidia® T4) platforms such as the Lenovo® HX1021, with no need for a separate DC rack or data room.

- Workload resiliency: Ability to keep working in a completely ‘disconnected’ mode, so edge workloads continue to run without connectivity.

- Multi-workload support (VMs, containers): A single platform runs both containerized workloads (e.g. AI/ML) and traditional VMs, with native unified storage (NFS/SMB/Objects). In the retail case, it can support applications such as POS, security, video monitoring and inventory.

- Hyperscaler management tools: Ability to leverage hyperscaler Kubernetes® management tools to provision and manage deployments using the native Nutanix Kubernetes Engine™ (NKE). We support Azure Arc™, Google Anthos® and Amazon EKS Anywhere™ solutions.

- Zero-touch remote deployment: Deploy to hundreds of sites in parallel using Foundation Central™ cluster-creation software and Nutanix Cloud Manager™ (NCM) Self-Service orchestration.

- Zero-downtime scalability: Adding nodes requires no service downtime, and Nutanix supports heterogeneous configurations within a cluster.

- Performance: Running inferencing at the Edge on the Nutanix Cloud Platform™ infrastructure delivers significantly lower latency and higher performance for demanding workloads than public cloud deployments.

Retail Example

To put these advantages into perspective, consider a retailer with 2,000 stores. You may have AI/ML apps (smart checkout, etc.) running at, say, 700 of the larger, high-revenue stores, and only general apps at the other 1,300. With a Nutanix® deployment, you still have a single management plane across ALL stores AND can incrementally add AI/ML capability by just adding a GPU-enabled node at the desired store, with no management layer change and no downtime. The result: faster time to value and lower TCO.
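The retail scenario above can be sketched as a small fleet model. This is only an illustration of the store counts and the "add a GPU node to one store" step; the `Store` class and the `build_fleet` and `add_gpu_node` helpers are hypothetical names invented for this example and are not part of any Nutanix API or tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Store:
    """Hypothetical model of one store's edge cluster (illustration only)."""
    store_id: int
    # Every store starts with a general-purpose node for apps such as POS.
    nodes: list[str] = field(default_factory=lambda: ["general"])

    @property
    def ai_capable(self) -> bool:
        # In this sketch, a store can run AI/ML apps once it has a GPU node.
        return "gpu" in self.nodes


def build_fleet(total_stores: int, ai_stores: int) -> list[Store]:
    """Create the fleet and give the first `ai_stores` stores a GPU node."""
    fleet = [Store(store_id=i) for i in range(total_stores)]
    for store in fleet[:ai_stores]:
        store.nodes.append("gpu")
    return fleet


def add_gpu_node(fleet: list[Store], store_id: int) -> None:
    """Add a GPU node to one store; no other store in the fleet is touched."""
    fleet[store_id].nodes.append("gpu")


# Numbers taken from the retail example: 2,000 stores, 700 with AI/ML apps.
fleet = build_fleet(total_stores=2000, ai_stores=700)
ai_count = sum(store.ai_capable for store in fleet)
general_count = len(fleet) - ai_count
print(f"AI/ML stores: {ai_count}, general-only stores: {general_count}")

# Later, one more store gains AI/ML capability by adding a single node.
add_gpu_node(fleet, store_id=1500)
print(f"AI/ML stores after upgrade: {sum(s.ai_capable for s in fleet)}")
```

The point of the sketch is that the fleet stays one list (one management plane in the analogy), and upgrading a store is a local change to that store alone.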

Finally, if needed, we can deliver the full ‘as-a-service’ model through Lenovo: Edge hardware, GPUs, Nutanix licenses, remote management and support. This allows the customer to scale from zero to thousands of locations effortlessly without high capex investments.

Our Global System Integrator (GSI) partners can leverage their domain expertise and help fine-tune AI/ML models to your industry and datasets.

This post may contain express and implied forward-looking statements, which are not historical facts and are instead based on our current expectations, estimates and beliefs. The accuracy of such statements involves risks and uncertainties and depends upon future events, including those that may be beyond our control, and actual results may differ materially and adversely from those anticipated or implied by such statements. Any forward-looking statements included herein speak only as of the date hereof and, except as required by law, we assume no obligation to update or otherwise revise any of such forward-looking statements to reflect subsequent events or circumstances.